Introduction

1. Objectives

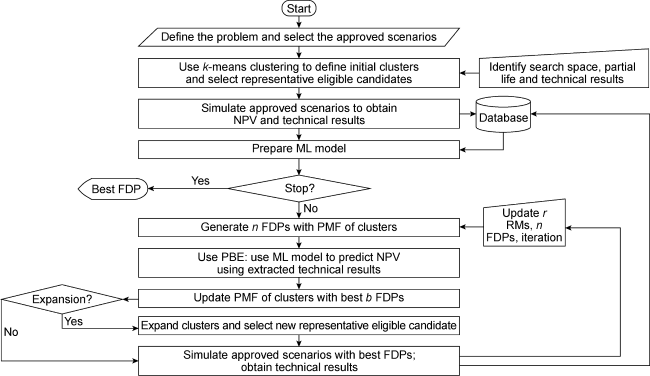

2. Methodology

Fig. 1. Flowchart of the algorithm to optimize FDP.

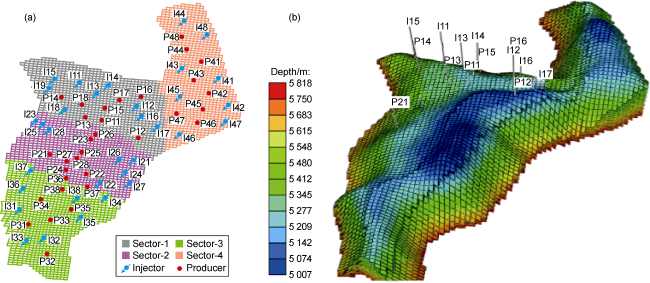

3. Application and results

3.1. Benchmark case

Fig. 2. (a) Sector distribution and (b) grid map of UNISIM-III-2022 (the first and second numbers in each well name refer to the sector and the drilling sequence, respectively).

Table 1. Summary of relevant activities and information for UNISIM-III-2022

| Period/d | Drilling, completion and production activity | Remarks |
|---|---|---|
| 0-1219 | 6 producers (P11-P16) and 7 injectors (I11-I17) in S1; producer P21 in S2 | Extended well test performed in S1 in the first year, followed by an idle period of 1.4 years |
| 1308-2039 | 4 producers (P22-P25) and 4 injectors (I21-I24) in S2; 2 producers and 2 injectors in both S3 and S4 | |
| 2039-2315 | 1 producer (P26) and 2 injectors (I25, I26) in S2; 2 producers and 2 injectors in both S3 and S4 | |
| 2404-2769 | 2 producers and 2 injectors in both S3 and S4 | |
| 2769-3134 | 2 producers and 2 injectors in S1 | All wells drilled in S1 |
| 3592-3957 | 2 producers and 2 injectors in S2, S3, and S4 each | All wells drilled in S2, S3, and S4 |
| 4322 | ~39% contractual life | |
| 5053 | ~46% contractual life | |
| 11 019 | Field abandonment | |

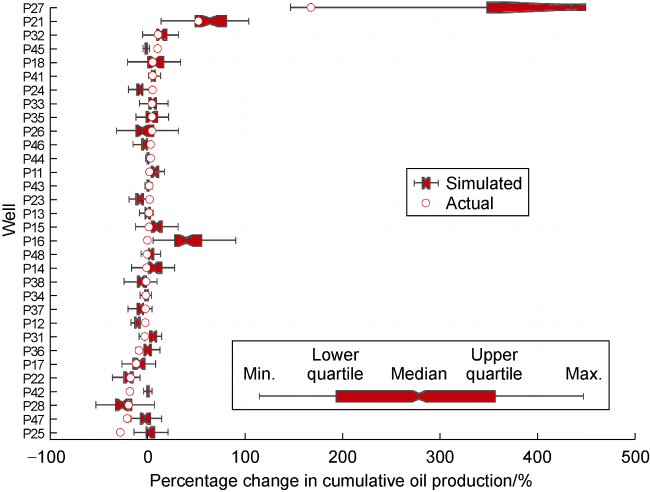

3.2. Cycle 1 of CLFD

Fig. 3. Percentage change in cumulative oil production of the respective wells at the end of Cycle 1 (to keep the graph legible, the result for P27 is truncated, as its maximum change was as high as 1500%).

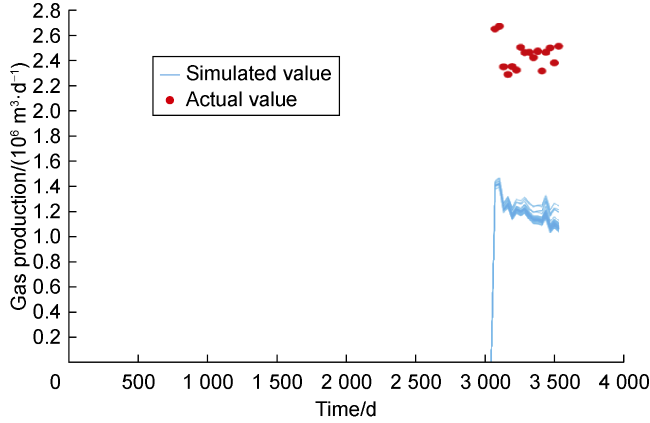

3.3. Cycle 3 of CLFD

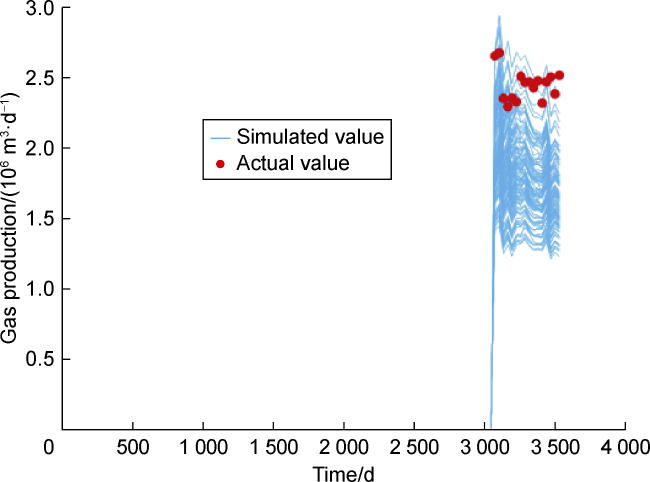

Fig. 4. Gas production of P17 after the initial data assimilation process.

Fig. 5. Gas production of P17 after revising data assimilation.

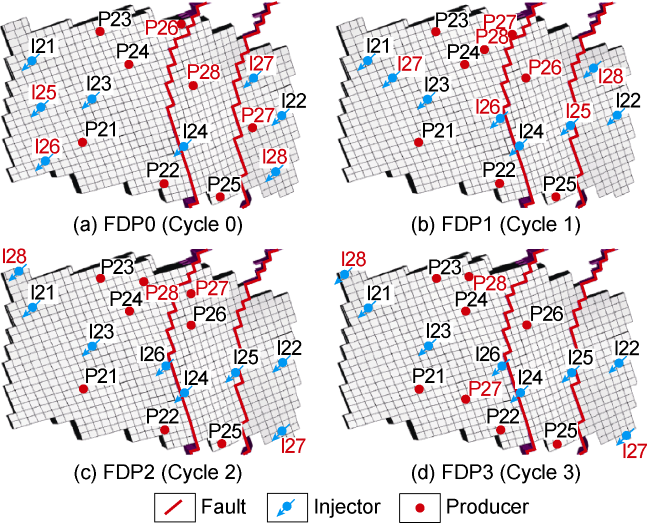

Fig. 6. Best FDP obtained at the end of each cycle (to-be-drilled wells are highlighted in red).

3.4. Outcome

Table 3. Details of our workflow in all CLFD cycles

| Cycle | Action | Update input | Data assimilation | Approved scenarios (AS) | Optimization | Probability of success/% | Execution time/d | Range of EVoCL/USD 10⁸ | EVoCL (for ensemble of AS)/USD 10⁸ | VoCL/USD 10⁸ |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | Obtained 0+7+3+3 new well logs (from S1, S2, S3, and S4, respectively); Generated Mprior,1 using a total of 27 vertical well logs | | Noisy production data over a period of 1978 d (18% of the field’s life) was used to obtain Mpost,1 | 44 | FDP0 was optimized to obtain FDP1. We used 26 (59% of the AS) scenarios to optimize using 10 iterations of CLEO. The complete ensemble was improved. | 99.5 | 32 | 0.3 to 5.0 | 2.5 | 0.8 |
| 2 | Obtained 0+4+5+5 new well logs (from S1, S2, S3, and S4, respectively); Generated Mprior,2 using a total of 41 vertical well logs | | Noisy production data over a period of 2404 d (22% of the field’s life) was used to obtain Mpost,2 | 48 | FDP1 was optimized to obtain FDP2. We used 30 (62.5% of the AS) scenarios to optimize using 7 iterations of CLEO. 94% of the ensemble was improved. | 91.3 | 27 | -0.4 to 1.8 | 0.7 | 1.1 |
| 3 | Obtained 4+0+4+4 new well logs (from S1, S2, S3, and S4, respectively); Generated Mprior,3 using a total of 53 vertical well logs | Range of fault transmissibility was modified from 0-1 to 0-0.5 for all four faults | Noisy production data over a period of 3531 d (32% of the field’s life) was used to obtain Mpost,3 | 40 | FDP2 was optimized to obtain FDP3. We used 24 (60% of the AS) scenarios to optimize using 7 iterations of CLEO. 90% of the ensemble was improved. | 88.1 | 34 | -0.3 to 1.6 | 0.5 | 0.8 |
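
The percentages quoted in Table 3 can be cross-checked with a few lines of arithmetic. The sketch below is only an illustration: the dictionary layout and the function names are hypothetical, and it assumes that the field's life is the 11 019 d to abandonment given in Table 1.

```python
# Assumption: field life equals the abandonment day reported in Table 1.
FIELD_LIFE_DAYS = 11019

# Values copied from Table 3 (history length, approved scenarios, scenarios used).
cycles = {
    1: {"history_days": 1978, "approved": 44, "used": 26},
    2: {"history_days": 2404, "approved": 48, "used": 30},
    3: {"history_days": 3531, "approved": 40, "used": 24},
}


def share_of_life(cycle):
    """Production history used in data assimilation, as % of field life."""
    return 100.0 * cycles[cycle]["history_days"] / FIELD_LIFE_DAYS


def share_of_as_used(cycle):
    """Scenarios used in optimization, as % of the approved scenarios (AS)."""
    c = cycles[cycle]
    return 100.0 * c["used"] / c["approved"]


for k in cycles:
    print(f"Cycle {k}: {share_of_life(k):.1f}% of field life, "
          f"{share_of_as_used(k):.1f}% of AS used")
```

Rounded to the precision used in the table, these values agree with the 18%, 22%, and 32% of the field's life and the 59%, 62.5%, and 60% of the AS reported for the three cycles.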

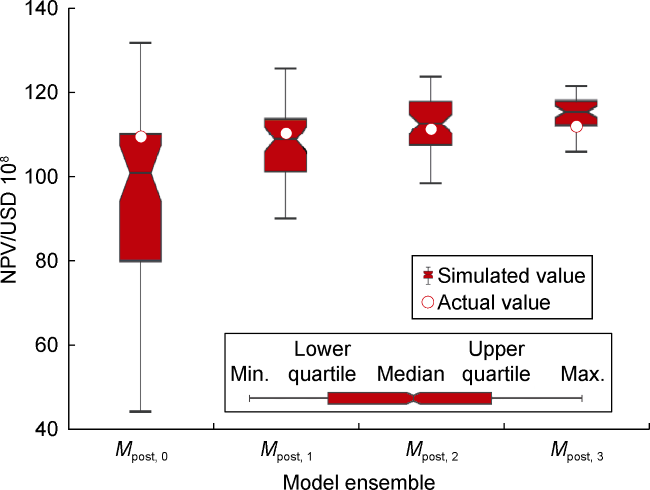

Fig. 7. Simulated and actual NPVs for all cycles.

Table 4. Predicted recovery factor (%) at the end of all cycles

| Cycle | S1 | S2 | S3 | S4 | Entire field |
|---|---|---|---|---|---|
| 0 | 36.3 | 31.4 | 36.0 | 29.3 | 33.1 |
| 1 | 36.3 | 31.6 | 36.3 | 29.3 | 33.2 |
| 2 | 37.1 | 31.7 | 36.3 | 29.6 | 33.5 |
| 3 | 36.9 | 33.0 | 36.3 | 29.2 | 33.7 |
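
To see where the predicted recovery moved between cycles, the rows of Table 4 can be differenced per sector. This is a minimal sketch for reading the table, not part of the workflow itself; the names `recovery` and `rf_change` are illustrative.

```python
# Recovery factors (%) copied from Table 4; keys are cycle numbers.
REGIONS = ("S1", "S2", "S3", "S4", "Entire field")
recovery = {
    0: (36.3, 31.4, 36.0, 29.3, 33.1),
    1: (36.3, 31.6, 36.3, 29.3, 33.2),
    2: (37.1, 31.7, 36.3, 29.6, 33.5),
    3: (36.9, 33.0, 36.3, 29.2, 33.7),
}


def rf_change(start, end):
    """Change in recovery factor (percentage points) per region between two cycles."""
    return {
        region: round(after - before, 1)
        for region, before, after in zip(REGIONS, recovery[start], recovery[end])
    }


for region, delta in rf_change(0, 3).items():
    print(f"{region}: {delta:+.1f} percentage points")
```

Between Cycle 0 and Cycle 3 the largest gain is in S2 (+1.6 percentage points), S4 changes by -0.1, and the entire field gains 0.6.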

4. Discussion and recommendations

Table 5. Key differences between Loomba et al. [1] and this work

| Step | Loomba et al. [1] | This work | Remarks |
|---|---|---|---|
| Action | Generated 500 images | Generated 100 images | 80% reduction in time |
| Update inputs | Generated 500 scenarios with updated inputs | Generated 100 scenarios with updated inputs | 80% reduction in time |
| Approved scenarios | ~50% scenarios approved | ~45% scenarios approved | A larger ensemble of approved scenarios is only justified if RMs can be selected without any simulation |
| Select RMs | Selected 9 RMs; only these RMs were used for optimization | Selected 3-12 RMs per iteration; different subset of RMs per iteration | Varying RMs helps reduce time and include more scenarios to make better decisions |
| Optimize | IDLHC was used to optimize well placement using 1600 experiments per RM | Combined ML model with CLEO algorithm | Over 90% reduction in time |
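
The 80% figures in Table 5 follow from the ensemble sizes if the time for a step is assumed to scale linearly with the number of images or scenarios generated; that proportionality is an assumption of this sketch, not a statement from the table. The helper names are hypothetical, and the approved-scenario counts are those listed in Table 3.

```python
def reduction_pct(baseline, current):
    """Relative reduction (%) of `current` with respect to `baseline`."""
    return 100.0 * (baseline - current) / baseline


def mean_approval_pct(approved_counts, generated_per_cycle):
    """Average share (%) of generated scenarios that were approved."""
    mean_approved = sum(approved_counts) / len(approved_counts)
    return 100.0 * mean_approved / generated_per_cycle


# 500 images/scenarios in Loomba et al. [1] versus 100 in this work.
print(reduction_pct(500, 100))

# Approved scenarios per cycle (Table 3), out of 100 generated scenarios.
print(mean_approval_pct([44, 48, 40], 100))
```

Under these assumptions the reduction is 80%, and the mean approval rate over the three cycles is 44%, in line with the "~45% scenarios approved" entry.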

4.1. Efficiency improvement and risk control

4.2. Considerations in practical implementation

4.3. Results of implementation

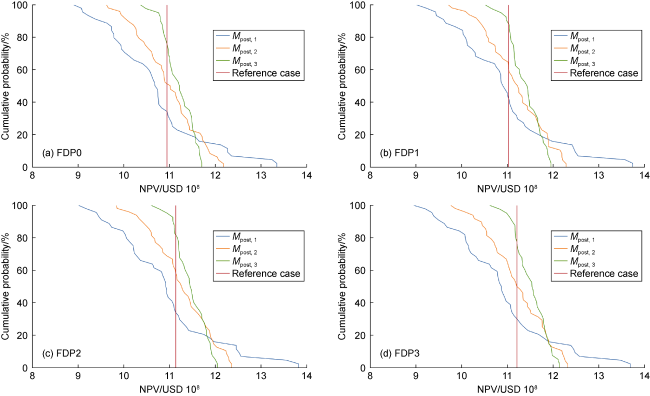

Fig. 8. Results of revised FDP in Mpost,1, Mpost,2, Mpost,3 and reference case.