Introduction

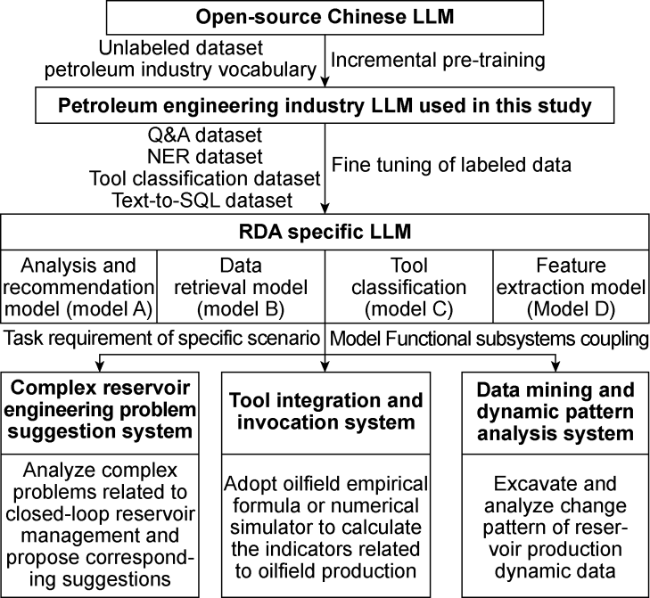

1. The approach to apply LLMs in RDA

Fig. 1. Schematic of the approach for constructing a scenario-specific LLM for RDA.

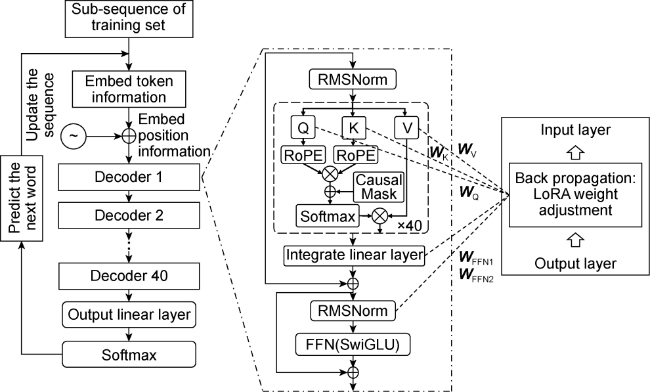

1.1. Incremental pre-training

Fig. 2. Model structure and parameter adjustment method of incremental pre-training. LoRA: low-rank adaptation, a technique for fine-tuning large language models; RMSNorm: root mean square normalization layer; Q, K, V: query, key, and value vector calculation layers; RoPE: rotary position embedding; Causal Mask: causal attention mask; Softmax: softmax normalization function; FNN: feedforward neural network layer; SwiGLU: gated linear unit activation function.
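The LoRA adjustment named in the caption can be illustrated with a minimal sketch. It assumes the standard LoRA formulation, in which a frozen weight matrix W is combined with a trainable low-rank product, W' = W + (alpha / r) · B · A. The matrix sizes, the rank `r`, the scaling factor `alpha`, and the function names are hypothetical and chosen only for illustration; this excerpt does not state which layers are adapted or which hyperparameters were used.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, a, b, alpha, r):
    """Return W + (alpha / r) * (B @ A); W stays frozen, only A and B are trained."""
    delta = matmul(b, a)  # shapes: (d_out x r) @ (r x d_in) -> (d_out x d_in)
    scale = alpha / r
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


# Hypothetical 2 x 3 frozen weight and rank-1 adapter (illustrative values only).
w = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
a = [[0.5, -0.5, 1.0]]        # r x d_in
b_init = [[0.0], [0.0]]       # d_out x r, zero-initialised as in standard LoRA
b_trained = [[1.0], [2.0]]    # stand-in for values after some training

w_start = lora_effective_weight(w, a, b_init, alpha=2.0, r=1)
w_tuned = lora_effective_weight(w, a, b_trained, alpha=2.0, r=1)
print(w_start)
print(w_tuned)
```

With B initialised to zero, the adapted weight equals the frozen weight, so training starts from the behaviour of the base model; only the small matrices A and B change afterwards.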

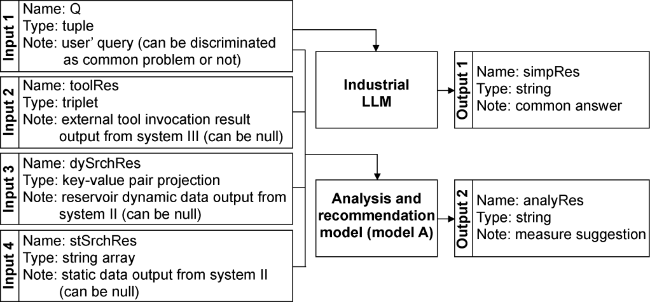

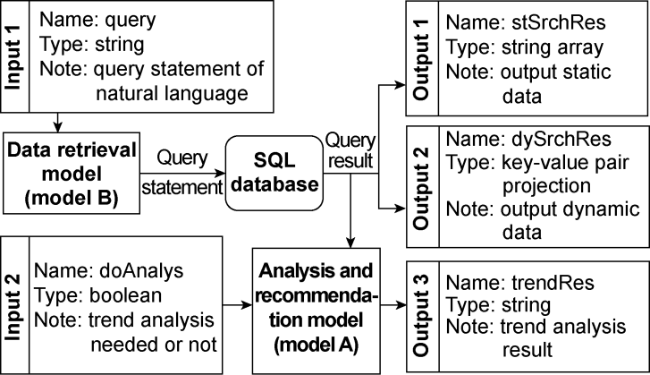

1.2. Fine-tuning for subsystem tasks

Fig. 3. Structure of the Complex Reservoir Engineering Problem Suggestion System (System I). AnalyRes, simplRes: System I output; DySrchRes, stSrchRes: System II output and System I input; Q: System I input; ToolRes: System III output and System I input.

Fig. 4. Structure of the Data Mining and Dynamic Pattern Analysis System (System II). DoAnalysis, query: System II input; StSrchRes: System II output and System I and III input; TrendRes: System II output; DySrchRes: System II output and System I and III input.

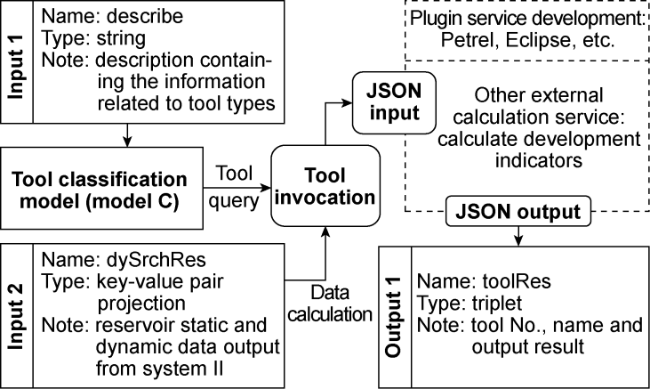

Fig. 5. Structure of the Tool Integration and Invocation System (System III). DySrchRes, describe: System III input; ToolRes: System III output and System I input.

1.3. Coupling of functional subsystems

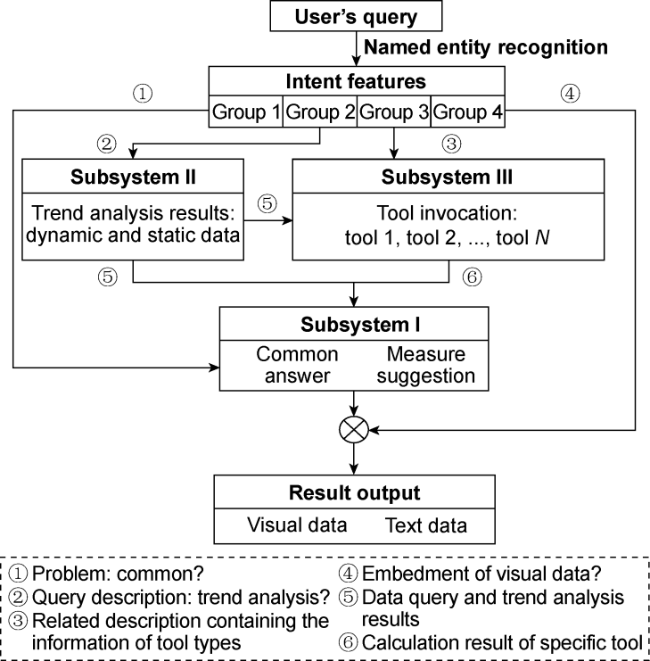

Fig. 6. Functional subsystem coupling method of the LLM for RDA (N: number of tools).

2. Fine-tuning and testing of LLMs for RDA

2.1. Fine-tuning and testing of the analysis and recommendation model

Table 1. QA performance testing of the analysis and suggestion model

| Experts | Total samples | Relevant dimensions | | Accurate dimensions | | Complete dimensions | | Fluent dimensions | |
|---|---|---|---|---|---|---|---|---|---|
| | | Number of exact samples | Accuracy/% | Number of exact samples | Accuracy/% | Number of exact samples | Accuracy/% | Number of exact samples | Accuracy/% |
| Expert 1 | 330 | 307 | 93.0 | 295 | 89.4 | 326 | 99.0 | 330 | 100.0 |
| Expert 2 | 330 | 315 | 95.5 | 297 | 90.0 | 324 | 98.0 | 328 | 99.4 |
| Expert 3 | 330 | 298 | 90.3 | 282 | 85.5 | 310 | 93.9 | 326 | 98.8 |
| Comprehensive | 330 | | 92.9 | | 88.3 | | 96.7 | | 99.4 |
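As a worked check of how the accuracy percentages in Table 1 relate to the sample counts, the sketch below recomputes the "Relevant dimensions" column. It assumes that each accuracy is the number of exact samples divided by the total samples, and that the "Comprehensive" value is the arithmetic mean of the three expert accuracies; the averaging rule is not stated in this excerpt, so it is an assumption, and the function name is illustrative.

```python
def accuracy_pct(correct, total):
    """Accuracy in percent, rounded to one decimal place."""
    return round(100.0 * correct / total, 1)


total = 330
# "Relevant dimensions" counts from Table 1.
relevant_counts = {"Expert 1": 307, "Expert 2": 315, "Expert 3": 298}

per_expert = {name: accuracy_pct(n, total) for name, n in relevant_counts.items()}

# Assumed rule: mean of the unrounded per-expert accuracies.
comprehensive = round(
    sum(100.0 * n / total for n in relevant_counts.values()) / len(relevant_counts), 1
)

print(per_expert)      # {'Expert 1': 93.0, 'Expert 2': 95.5, 'Expert 3': 90.3}
print(comprehensive)   # 92.9
```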

2.2. Fine-tuning and testing of the feature extraction model

Table 2. Performance test of the NER model

| Named entities | Definition | Total number of samples | Entity identification | | Numeric variable entity identification | | Semantic recognition | |
|---|---|---|---|---|---|---|---|---|
| | | | Number of correct samples | Recognizable rate/% | Number of correct samples | Accuracy/% | Number of correct samples | Accuracy/% |
| Well_id | Well No. | 1 300 | 1 275 | 98.1 | 1 247 | 96.5 | | |
| Well_layer | Layer No. | 1 300 | 1 235 | 95.0 | 1 222 | 94.0 | | |
| Data_from | Start time | 1 300 | 1 287 | 99.0 | 1 274 | 98.0 | | |
| Data_to | End time | 1 300 | 1 287 | 99.0 | 1 274 | 98.0 | | |
| Period | Stage (e.g., annually) | 1 300 | 1 261 | 97.0 | 1 274 | 98.0 | | |
| Target | Primary issues (e.g., oil production) | 1 300 | 1 209 | 93.0 | 1 189 | 91.5 | | |
| Wanted_type | Type of display (e.g., curve graph) | 1 300 | 1 235 | 95.0 | 1 168 | 89.8 | | |

2.3. Fine-tuning and testing of the data retrieval model

Table 3. Text-to-SQL performance test of the data retrieval model

| Model accuracy standards | | Matching situation before fine-tuning | | Matching situation after fine-tuning | | Implementation after fine-tuning | |
|---|---|---|---|---|---|---|---|
| Grading | Total number of samples | Number of exact samples | Accuracy/% | Number of exact samples | Accuracy/% | Number of exact samples | Accuracy/% |
| Simple | 700 | 485 | 69.3 | 700 | 100.0 | 700 | 100.0 |
| Medium | 500 | 337 | 67.4 | 498 | 99.6 | 462 | 92.4 |
| Complex | 80 | 46 | 57.5 | 70 | 87.5 | 53 | 66.3 |
| Extremely difficult | 20 | 5 | 25.0 | 11 | 55.0 | 8 | 40.0 |
| Overall accuracy | | | 67.3 | | 98.3 | | 95.2 |
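Table 3 reports a "matching" score and an "implementation" score separately. The sketch below illustrates one common way such a distinction is drawn in Text-to-SQL evaluation: comparing the generated query text against a reference, versus comparing the rows both queries return when executed. This excerpt does not give the scoring definitions used for the table, so the two functions, the `production` table, and its columns are hypothetical illustrations rather than the evaluation procedure behind the reported numbers.

```python
import sqlite3


def normalize(sql):
    """Lower-case, drop semicolons, and collapse whitespace before comparing text."""
    return " ".join(sql.lower().replace(";", " ").split())


def text_match(pred, gold):
    """True if the predicted SQL equals the reference SQL after normalization."""
    return normalize(pred) == normalize(gold)


def execution_match(conn, pred, gold):
    """True if both queries run and return the same rows (order ignored)."""
    try:
        pred_rows = conn.execute(pred).fetchall()
    except sqlite3.Error:
        return False  # a query that fails to execute counts as wrong
    gold_rows = conn.execute(gold).fetchall()
    return sorted(pred_rows) == sorted(gold_rows)


# Hypothetical in-memory table standing in for a production database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE production (well_id TEXT, month TEXT, oil_t REAL)")
conn.executemany(
    "INSERT INTO production VALUES (?, ?, ?)",
    [("W1", "2023-01", 120.0), ("W1", "2023-02", 115.5), ("W2", "2023-01", 80.0)],
)

gold = "SELECT month, oil_t FROM production WHERE well_id = 'W1'"
pred = "SELECT month, oil_t FROM production WHERE well_id IN ('W1')"

matched_text = text_match(pred, gold)
matched_exec = execution_match(conn, pred, gold)
print(matched_text, matched_exec)
```

In this example the two queries differ as text but return the same rows, which is why the two scores can diverge for the same set of samples.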

2.4. Fine-tuning and testing of the tool classification model

Table 4. Three methods of tool identification and their accuracy assessment

| Identification tools | Number of exact samples | Accuracy/% |
|---|---|---|
| NER direct recognition tool | 467 | 35.9 |
| Classification task direct discrimination tool | 423 | 32.5 |
| NER target + classification task discrimination tool | 1 161 | 89.3 |

3. Function testing of the LLM

3.1. Real-time retrieval and query of single-well injection and production data, and sorting and retrieval of single-well historical measure data

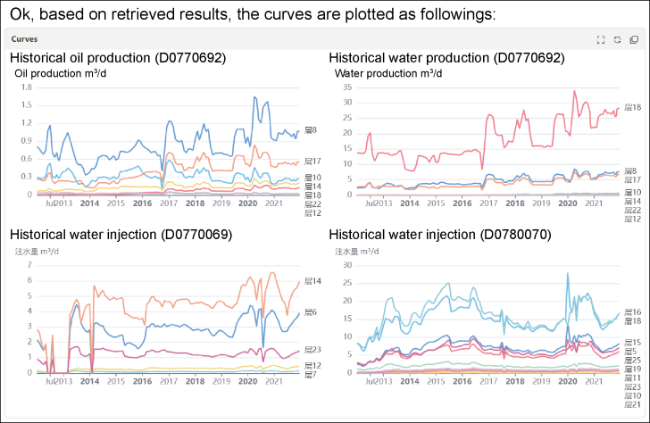

Fig. 7. Query and plotting results of historical injection and production data.

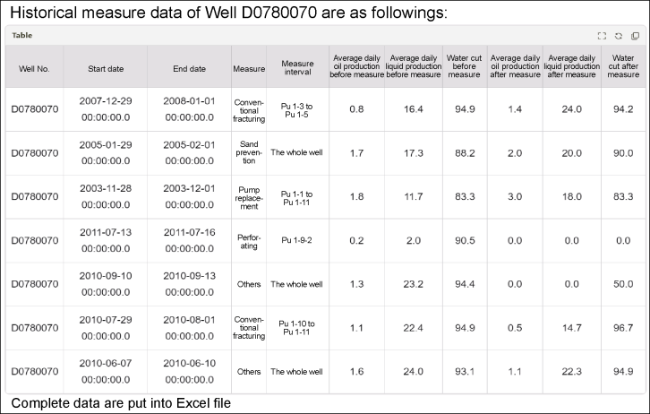

Fig. 8. Query and printing results of historical well measure data.

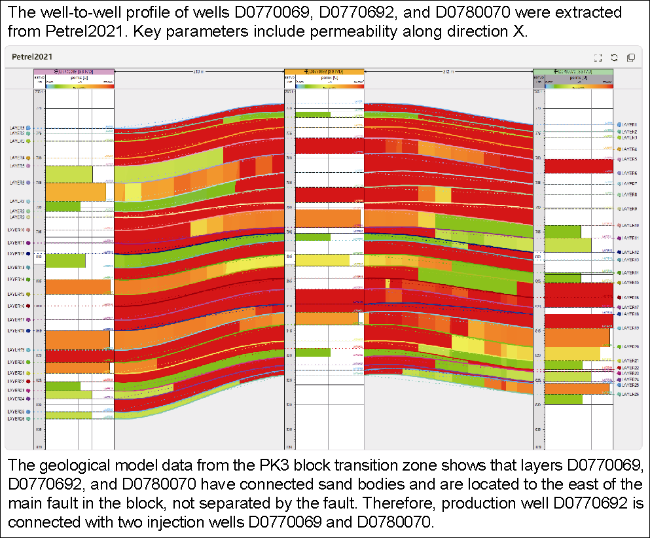

3.2. Invoking tools to map the well-to-well profiles and calculate key technical indicators

Fig. 9. Well-to-well profile drawn by calling Petrel2021 software, and injection-production connectivity analysis results based on the geological model data.