In recent years, artificial intelligence technology has been applied in the field of oil and gas exploration and development

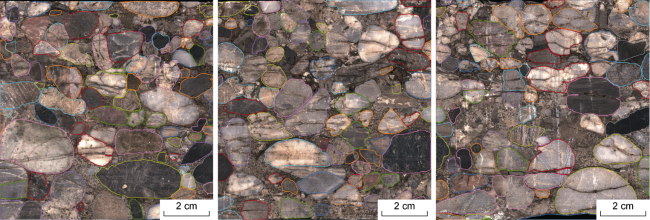

[1-2]. Scholars have conducted research on the quantitative analysis of rock structure. The existing studies mainly focus on rock particle segmentation and the quantitative characterization of rock structure parameters such as particle size and roundness. Rock particle segmentation is the foundation of intelligent rock structure evaluation, and image segmentation algorithms are widely used in rock particle segmentation of core images

[3-4]. Early methods mainly relied on traditional image processing algorithms

[5-6], which extracted particle edges based on shallow image features and had relatively poor robustness

[7-8]. In contrast, deep learning algorithms

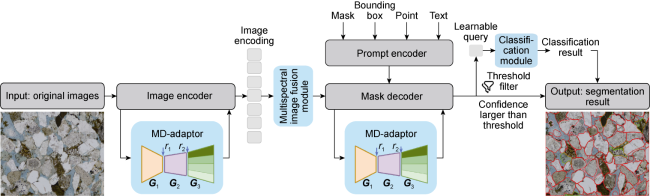

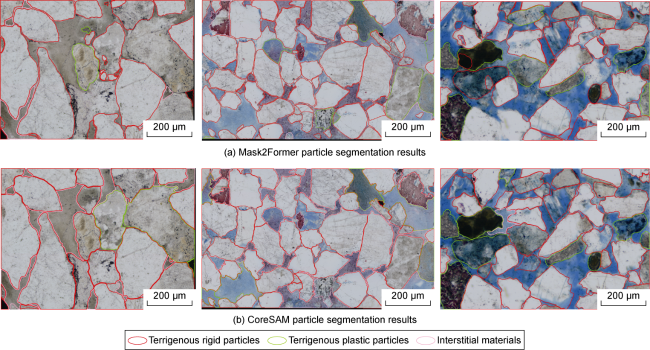

[9-10] can learn semantic features, not only extracting rock particle edges but also identifying their category attributes, making them the mainstream algorithms for rock particle segmentation

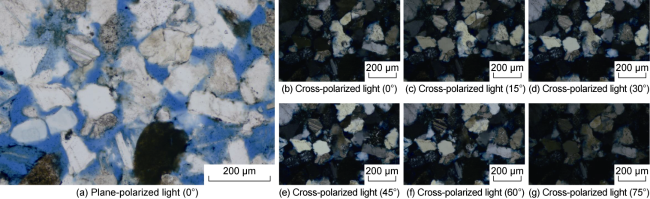

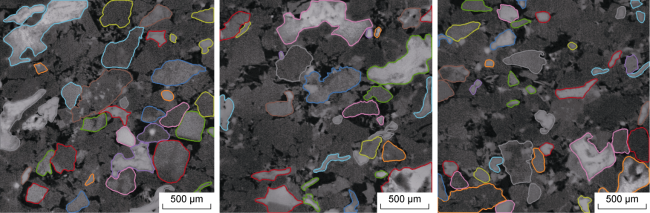

[11-13]. Most existing deep learning-based segmentations are implemented for single images. Ren et al.

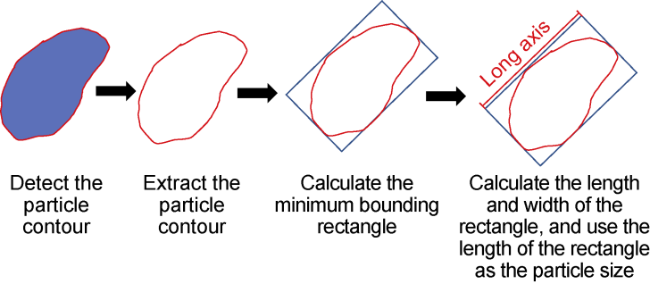

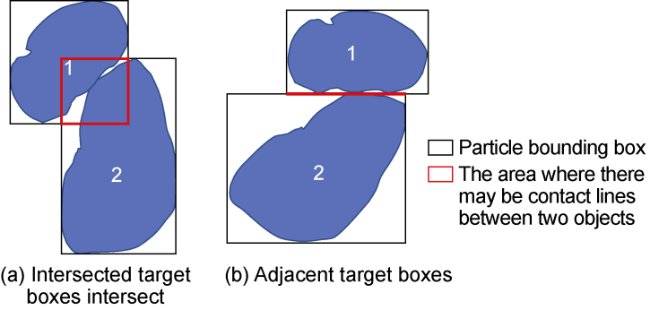

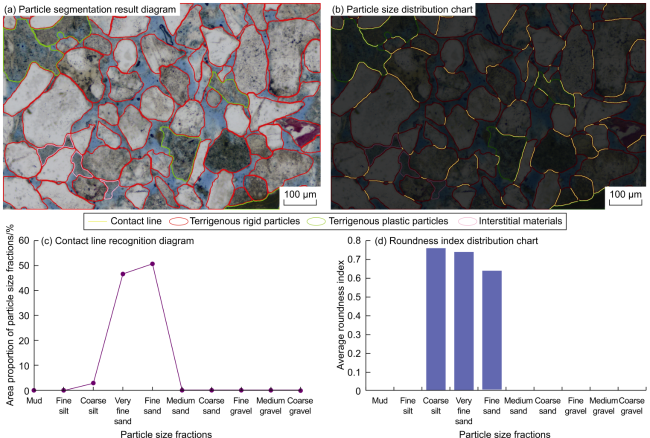

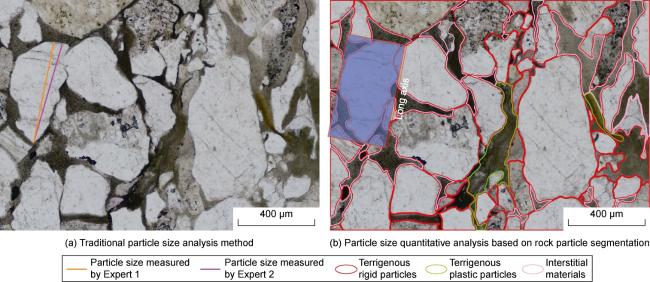

[14] innovatively proposed a new deep learning algorithm that uses microscopic images under plane-polarized light and multi-angle cross-polarized light as input to achieve rock particle segmentation using multiple images. Based on rock particle segmentation, quantitative characterization of rock structure parameters such as particle size

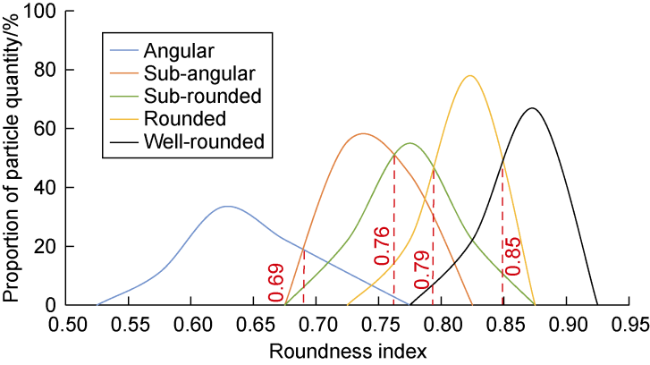

[15-16], sorting, and roundness

[17-20] can be achieved. Research on compaction mainly deals with the quantitative relationship between porosity and the degree of compaction, as well as the quantitative classification of compaction

[21], often in combination with computer image processing software. In the field of cementation research, current methods mainly rely on microscope observation and the construction of rock physics models

[22-23]. Although the quantitative research of rock structure has achieved certain results, it still has the following limitations. (1) Traditional deep learning models are affected by factors such as diagenesis, resulting in weak cross-sample generalization capability. (2) Existing particle segmentation algorithms do not fully utilize particle characteristics such as interference colors and extinction under multi-angle polarization, and the accuracy of particle segmentation needs improvement. (3) Rock structure parameters (compaction, cementation type) lack classification statistics and quantitative characterization methods and still rely on qualitative/semi-quantitative evaluation.