Recently, booming deep learning algorithms have inspired novel options for image processing

[19], digital rock reconstruction

[20], and modeling of multiple physical processes

[21-23]. One effective way involves supplementing unresolved pores into coarse-scale digital rock, which is performed on binary structures using neural networks

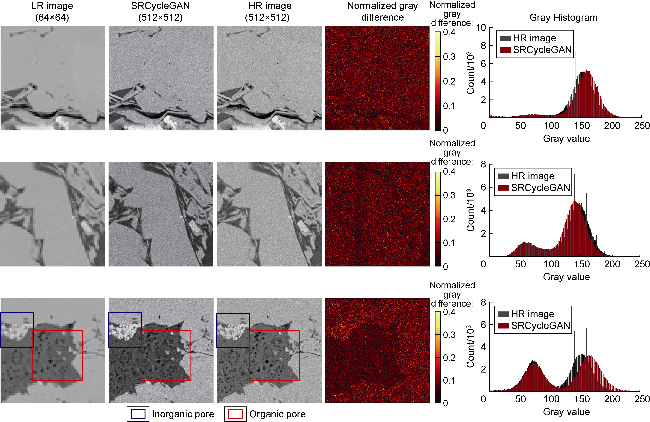

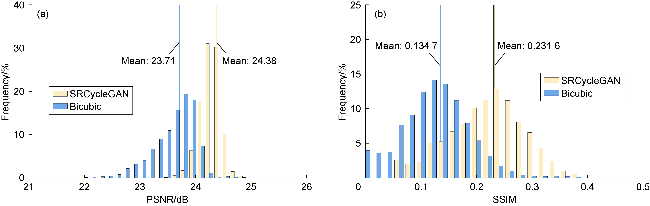

[24-25]. Another advanced approach is deep learning-based super-resolution (SR), which learns the end-to-end mapping between LR and HR images, effectively capturing the hierarchical representation and enhancing LR image quality

[19,26-27]. These CNN (convolutional neural network)-based SR (SRCNN) models perform well at noise reduction, deblurring, and recovering edge detail and sharpness, but lose the high-frequency texture details nonetheless. Thus, GAN-based super-resolution methods (SRGANs), Residual Channel Attention Network (RCAN), and transformer-based models, e.g., Efficient Attention Super-Resolution Transformer (EAST), were proposed, and they are capable of boosting resolution and recovering textures

[28-31]. Notably, training LR images are synthetically downsampled from HR images, inevitably resulting in a loss of noise and blur information compared to real-world images, and are not representative. Otherwise, paired HR and LR images are generally unavailable, and the HR images are limited in number due to time-consuming and expensive imaging at high resolution.
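To make the synthetic-pairing limitation concrete, the following is a minimal sketch of how an LR training image might be generated from an HR image. The cited works do not specify a downsampling scheme here, so block averaging, the scale factor of 4, the function name `downsample_block_mean`, and the random placeholder image are all assumptions for illustration only.

```python
import numpy as np

def downsample_block_mean(hr, factor):
    """Average non-overlapping factor x factor blocks of a 2D image.

    Hypothetical helper: block averaging is one possible synthetic
    downsampling scheme, not necessarily the one used in the cited works.
    """
    h, w = hr.shape
    if h % factor or w % factor:
        raise ValueError("image dimensions must be divisible by factor")
    # Split each axis into (blocks, pixels-per-block) and average within blocks.
    return hr.reshape(h // factor, factor, w // factor, factor).mean(axis=(1, 3))

rng = np.random.default_rng(0)
hr = rng.random((64, 64))          # placeholder standing in for an HR grayscale slice
lr = downsample_block_mean(hr, 4)  # synthetic LR counterpart, shape (16, 16)
```

An LR image produced this way is a deterministic function of the HR image: it carries no scanner noise and no optical blur beyond the averaging itself, which is why a model trained on such pairs may not transfer well to real LR scans.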